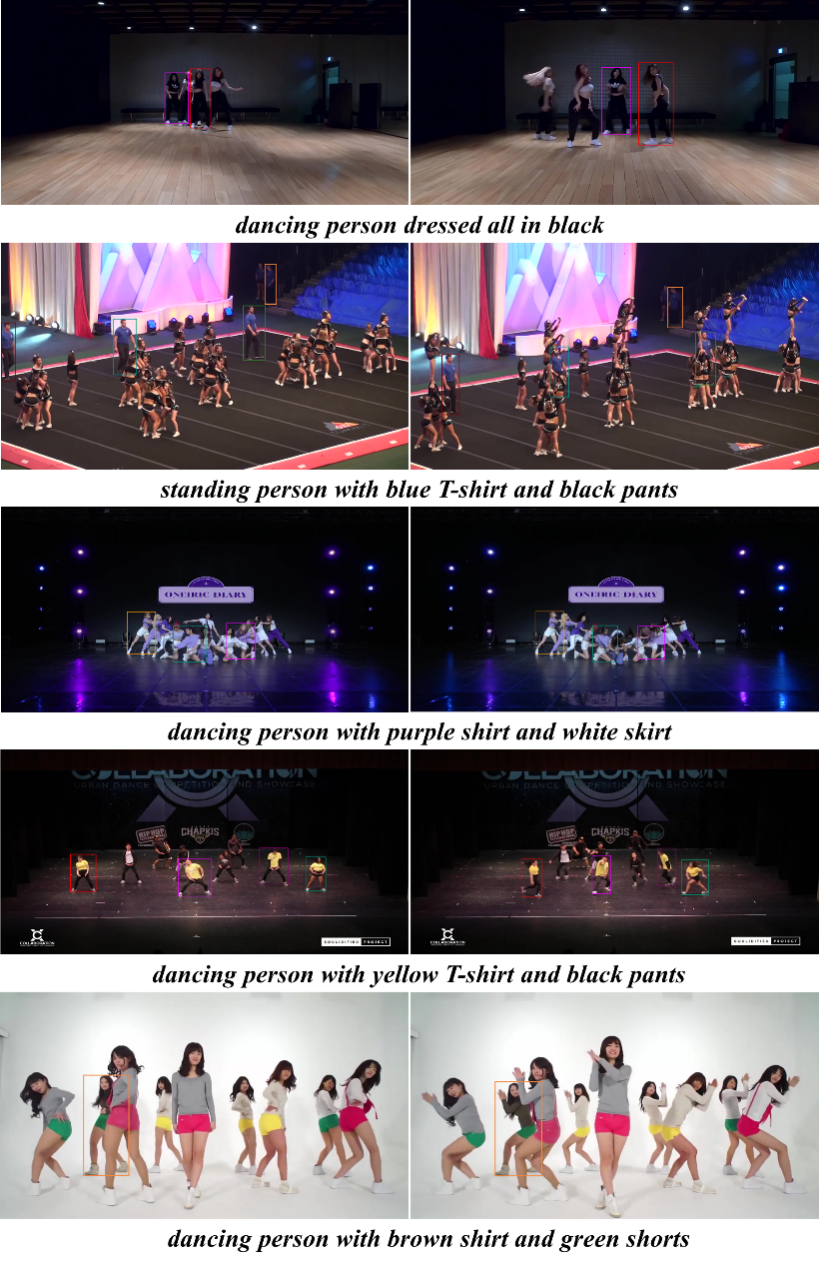

Refer-Dance is constructed for referring multiple object tracking, which aims to track corresponding objects according input descriptions. It is extended from DanceTrack by manually annotating textual sentences. Specifically, the dataset contains 40 videos with 39 distinct descriptions for training, and 25 videos with 17 distinct descriptions for testing. The description annotations focus on motion and dressing status, e.g., “dancing person with black T-shirt and green pants” and “standing person dressed all in black”. The dataset follows the open-set setting, in which test descriptions don’t necessarily appear in the training set. Refer to Figure 1 for some visualization examples. For evaluation, HOTA series, MOTA and IDF1 are utilized to measure the tracking performance.

Figure. Visualization examples from Refer-Dance.